How AI is exposing cybersecurity weaknesses in emerging markets

In the 5G era, cybersecurity vigilance is standard, but many operators and businesses have still struggled to withstand the constant barrage of attacks. In the past year, even colossi such as Cloudflare have fallen victim, and now that AI enables cyber threats to mutate beyond established legacy defences with alarming speed, companies worldwide are struggling to keep up.

AI-based threats are uncharted territory, rapidly evolving and acting autonomously. Mauricio Sanchez, Senior Director of Enterprise Security and Networking at Dell’Oro, explains that while legacy cybersecurity tools remain important in addressing these threats, they were built for a more predictable threat model.

“AI expands the attack surface to include prompts, models, data pipelines, agents, and automated workflows”, says Sanchez. “AI-based threats do not always look like traditional malware or intrusion behaviour – a prompt injection attack can manipulate an AI system without dropping a file, and data poisoning can compromise outcomes before a model is deployed. Agentic AI raises the stakes because the system may not just respond; it may take actions through APIs, SaaS tools, or enterprise workflows.”

Hakim Hamane, Managing Director at BCG Platinion Casablanca, agrees that AI has enabled a rapid evolution of cybersecurity threats – and notes that this is driving the sophistication of malicious attacks, leaving technology firms playing catch-up as AI becomes increasingly autonomous.

Sanchez concurs, saying: “The problem is not that legacy security tools stop working – it’s that AI creates new control points that those tools were never designed to see. The implication is that enterprises need visibility into the data, model, prompt, identity, and tool-use layers, not only the endpoint or network layer.”

Emerging markets, developing threats

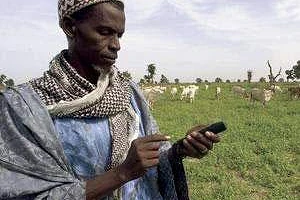

The effect could be felt more acutely in emerging markets, Sanchez notes, because attackers gain access to global AI capabilities immediately, while many defenders still operate under local budget, staffing, regulatory, and infrastructure constraints. AI lowers the cost of phishing, fraud, impersonation, reconnaissance, and exploit development. This would have a major impact virtually anywhere in the world, but it will be felt even more severely in regions where SOC (security operations centre) maturity, incident response capacity, and cyber skills are still developing.

Sanchez notes that with many emerging markets rapidly adopting mobile banking, digital government, cloud services, and telecom-led platforms, the attack surface is expanding faster than security maturity can keep up. In effect, the advance of AI is compressing this timeline – markets that were still closing basic security gaps are realising that they must now also defend against AI-enabled attacks. Sanchez emphasises that this is not to suggest that emerging markets are standing still or getting left behind – many are improving rapidly. However, AI advances are raising the minimum level of cybersecurity capability needed to operate safely in a digital economy.

Defence against the dark AIs

So, what can companies do to protect themselves? In the near term, Sanchez argues that enterprises must regain visibility and control, while in the longer term, they must rebuild their security architecture around identity, data, AI systems, and automated workflows.

The immediate priority, says Sanchez, must be practical: inventory where AI is being used, what data it touches, which users and agents can access it, and which external models or APIs are involved. Enterprises then need disciplined execution around MFA, least privilege, patching, email security, DLP, logging, and incident response. For many emerging-market enterprises, managed security services and cloud-delivered controls may be the fastest way to close the operational gap.
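As a rough illustration of what such an inventory might look like in practice, the Python sketch below records, for each AI system, the data it touches, who can access it, and which external APIs it calls, then flags a few basic gaps. The field names, the `SENSITIVE` categories, the example entries, and the three checks are all hypothetical assumptions made for this sketch; they are not drawn from Sanchez or from any particular product or standard.

```python
from __future__ import annotations
from dataclasses import dataclass, field

# Hypothetical data categories treated as sensitive in this sketch.
SENSITIVE = {"customer_pii", "payment_data"}

@dataclass
class AIAsset:
    """One row of an illustrative AI inventory (all fields are assumptions)."""
    name: str
    data_touched: list[str]          # what data the system reads or writes
    principals: list[str]            # users, teams, or agents with access
    external_apis: list[str] = field(default_factory=list)  # third-party models/APIs
    mfa_enforced: bool = False
    logging_enabled: bool = False

def review_flags(asset: AIAsset) -> list[str]:
    """Return a list of basic control gaps for one inventory entry."""
    flags = []
    if SENSITIVE & set(asset.data_touched) and asset.external_apis:
        flags.append("sensitive data reaches external API")
    if not asset.mfa_enforced:
        flags.append("MFA not enforced")
    if not asset.logging_enabled:
        flags.append("logging disabled")
    return flags

# Two made-up entries to show the shape of the output.
inventory = [
    AIAsset("support-chatbot", ["customer_pii"],
            ["support-team", "agent:ticket-bot"], ["hosted-llm-api"],
            mfa_enforced=True, logging_enabled=False),
    AIAsset("code-assistant", ["source_code"], ["engineering"],
            mfa_enforced=True, logging_enabled=True),
]

report = {asset.name: review_flags(asset) for asset in inventory}
for name, flags in report.items():
    print(name, "->", flags or "no gaps flagged")
```

Even a simple structure like this makes the four inventory questions answerable per system, and the flagged gaps map directly onto the follow-up controls listed above, such as MFA and logging.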

“Longer term, AI security cannot sit as a standalone tool beside the rest of the stack”, says Sanchez. “It must integrate with identity, data security, CNAPP, SASE/SSE, application security, and SecOps. The implication is that enterprises should separate two questions: how AI helps security teams, and how the enterprise secures AI itself. Both matter, but they are not the same problem.”

Top-down approach

Hamane notes that cybersecurity has historically been viewed as an issue for a company's technology stakeholders alone. However, AI has changed this – today, there must be a holistic view of what's happening in the business to align its ambitions with awareness of cybersecurity threats. He argues that companies will need to deploy a new generation of defensive tools to get ahead of the proliferation of AI in offensive cyberattacks, but notes that this will require more specialised personnel. Africa in particular lacks technology specialists, and Hamane sees this as a major area for progress, with universities establishing curricula focused on cybersecurity skills to foster talent across the continent, and start-ups working to address the gap.

“We need to look at it as an ecosystem play – to encourage startups in different parts of Africa to work on this topic, [as well as] universities, companies, governments that need to fund these initiatives, to look at it as a sovereignty issue. You can have a breach in an airline, social security, a bank, and suddenly you can lose the trust of your customers, of your citizens. It's very difficult to come back after that.”

Regul-AI-tion

A major lever to strengthen the cybersecurity ecosystem is regulation – with AI advancing so rapidly, traditional regulation has lagged behind, and Hamane argues that a paradigm shift is required in this area. He calls for shorter regulation cycles which can be iterated upon quickly, with feedback integrated swiftly – otherwise it will never keep pace with a technology that effectively changes on a weekly basis.

Sanchez takes a pragmatic approach to the issue, arguing that while regulation can help, it can only do so if it creates clear accountability and minimum controls without turning AI security into a checkbox exercise.

“Good regulation can force visibility; high-risk AI systems may require documentation, risk management, human oversight, incident reporting, supplier accountability, and cybersecurity controls. That matters because many enterprises will not fully understand their AI exposure until they are required to inventory and govern it.”

He concurs with Hamane that the main challenge is speed – AI adoption is outpacing most regulatory processes, and overly prescriptive rules can quickly become outdated. The better model is outcome-based regulation supported by technical standards and cross-border cooperation.

Ultimately, however, emerging-market countries will only be able to meet the cybersecurity challenges presented by AI through familiarity and preparedness – regulation can raise the floor, but it cannot replace operational maturity. As Sanchez notes, policy can improve baseline accountability, but enterprises still need the architecture, skills, monitoring, and response capability to manage AI risk in real time.